One major focus of this HPC section will be a detailed walkthrough of building and configuring a four-node Raspberry Pi cluster. This will include static networking, SSH automation, MPI programming, file sharing through NFS, and performance testing tools such as HPL.

• Built and configured a Raspberry Pi compute cluster with MPI, static-IP networking, SSH key authentication, and an NFS shared filesystem.

• Benchmarked cluster performance using HPL and performed workload-distribution tuning to evaluate scaling efficiency.

• Competed in the 2025 SBCC Small-Board Cluster Competition, operating an ~11-node cluster and running distributed workloads including password cracking and ParFEMWARP. Completed single-node execution and diagnosed multi-node MPI failures; placed in the top three in the systems interview.

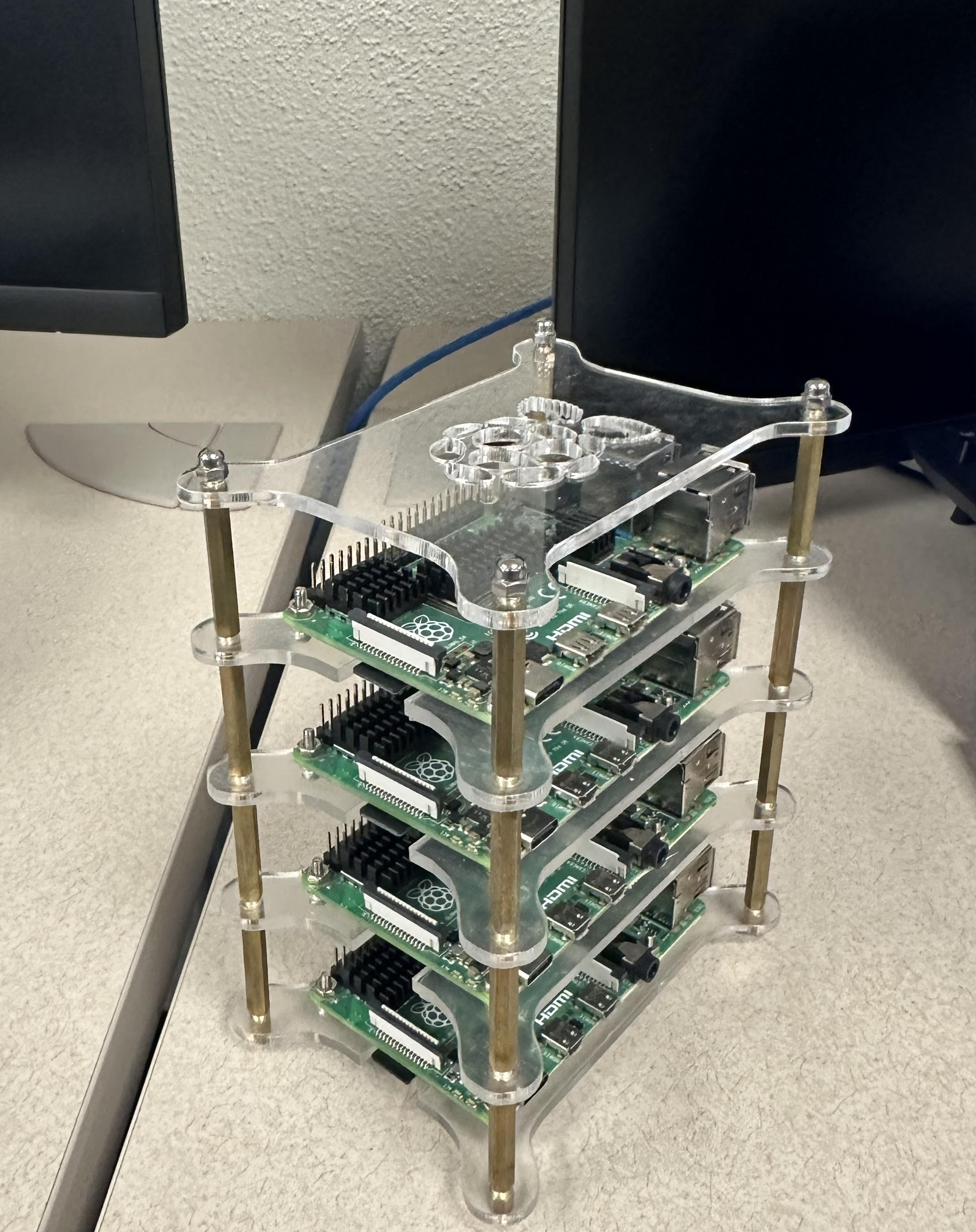

During the Spring 2025 semester, the main system was a four-node Raspberry Pi cluster built to model the core architecture of a distributed-memory high-performance computing (HPC) system. Each node had its own independent CPU, memory, storage, and Linux image, and inter-node communication ran through a dedicated Ethernet switch. Together, the nodes formed a private local network for distributed workloads.

One node (typically the top unit in the stack) functioned as the head node. The head node coordinated the other three worker nodes by managing SSH access, distributing jobs, orchestrating file transfers, and exporting shared resources (e.g., the NFS shared filesystem) used across the cluster.
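To make the head node's bookkeeping concrete, the following Python sketch generates the per-node static-IP table (as `/etc/hosts` lines), an Open MPI-style hostfile, an NFS `/etc/exports` line, and the `ssh-copy-id` commands used to push the head node's key to each worker. The subnet, starting address, hostnames (`head`, `worker1` to `worker3`), shared path, user name, and `slots=4` (one slot per core of a quad-core Pi) are all hypothetical placeholders, not the values used on the actual cluster, and `cluster_plan` is an illustrative name.

```python
import ipaddress


def cluster_plan(subnet="192.168.1.0/24", first_host=101, n_nodes=4,
                 slots=4, share="/home/pi/shared", user="pi"):
    """Build static-IP, hostfile, NFS, and SSH-key snippets for a small cluster.

    Every default here is a hypothetical placeholder.
    """
    net = ipaddress.ip_network(subnet)
    nodes = []
    for i in range(n_nodes):
        ip = net.network_address + first_host + i  # consecutive static addresses
        if ip not in net or ip in (net.network_address, net.broadcast_address):
            raise ValueError(f"{ip} is not a usable host address in {net}")
        name = "head" if i == 0 else f"worker{i}"  # node 0 acts as the head node
        nodes.append((name, str(ip)))

    # /etc/hosts entries so every node can resolve every other node by name
    hosts = "\n".join(f"{ip}\t{name}" for name, ip in nodes)
    # Open MPI hostfile: one line per node, `slots` = MPI processes per node
    hostfile = "\n".join(f"{name} slots={slots}" for name, _ in nodes)
    # /etc/exports line on the head node, shared with the private subnet only
    exports = f"{share} {net.with_prefixlen}(rw,sync,no_subtree_check)"
    # Run once on the head node to install its public key on each worker
    ssh_setup = [f"ssh-copy-id {user}@{name}" for name, _ in nodes[1:]]
    return nodes, hosts, hostfile, exports, ssh_setup


if __name__ == "__main__":
    nodes, hosts, hostfile, exports, ssh_setup = cluster_plan()
    print(hosts, hostfile, exports, *ssh_setup, sep="\n\n")
```

Generating all four snippets from one node list keeps the hosts file, hostfile, and export rule consistent with each other, which matters once a static address or hostname changes.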

In a distributed-memory model, each node operates as an independent machine rather than sharing a single memory space. Any multi-node workload therefore required explicit coordination between nodes, and the network acted as the backplane that joined the compute power of the individual Pi nodes. The dedicated switch gave the nodes an isolated communication path, while the head node coordinated shared resources and gave every node a consistent working environment. On a small scale, this setup mirrors how larger HPC systems in research and industry divide computing responsibilities across many nodes.

Message Passing Interface (MPI) was set up after NFS and served as the core programming model that made the distributed-memory cluster behave like a single parallel system. A shared NFS directory lets every node see the same executables and input files at the same path, which simplifies launching MPI jobs. Because each Raspberry Pi had its own private memory, processes could not share variables directly and instead had to coordinate by sending messages over the network. MPI provided the structure for launching the same program across multiple nodes: each process is assigned a rank, and processes exchange data and synchronize through operations such as send and receive. The head node served as the launch and control point for MPI jobs, consistent with its role coordinating the rest of the cluster.
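Running real MPI code requires an MPI runtime, so the sketch below imitates only the rank and send/receive pattern, using Python threads and one `queue.Queue` mailbox per rank. `ToyComm`, `block_range`, `rank_main`, and `run` are hypothetical names; the threads stand in for processes on separate nodes and deliberately exchange data only through messages, never through shared variables. Each rank sums the squares of its own contiguous block of indices, the worker ranks send their partial sums to rank 0, and rank 0 receives and combines them, the same pattern a hand-written `MPI_Send`/`MPI_Recv` reduction follows.

```python
import queue
import threading


class ToyComm:
    """Hypothetical stand-in for an MPI communicator: one mailbox per rank."""

    def __init__(self, size):
        self.size = size
        self._mailboxes = [queue.Queue() for _ in range(size)]

    def send(self, obj, dest, source):
        # Deliver (source, payload) to the destination rank's mailbox
        self._mailboxes[dest].put((source, obj))

    def recv(self, rank):
        # Block until any message arrives for this rank
        return self._mailboxes[rank].get()


def block_range(rank, size, n):
    """Split [0, n) into `size` contiguous blocks; low ranks get one extra item."""
    base, extra = divmod(n, size)
    start = rank * base + min(rank, extra)
    stop = start + base + (1 if rank < extra else 0)
    return start, stop


def rank_main(rank, comm, n, results):
    # Each rank works only on its own slice of the index space
    start, stop = block_range(rank, comm.size, n)
    local = sum(i * i for i in range(start, stop))
    if rank == 0:
        total = local
        for _ in range(comm.size - 1):  # one message from each worker rank
            _source, partial = comm.recv(0)
            total += partial
        results["total"] = total
    else:
        comm.send(local, dest=0, source=rank)


def run(size, n):
    comm = ToyComm(size)
    results = {}
    threads = [threading.Thread(target=rank_main, args=(r, comm, n, results))
               for r in range(size)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results["total"]


if __name__ == "__main__":
    print(run(size=4, n=1000))
```

On the real cluster the same logic would be a single program launched on every node (for example through Open MPI's `mpirun` with a hostfile), with `rank` and `size` supplied by the MPI library instead of a thread index; a collective such as `MPI_Reduce` would normally replace the hand-written receive loop.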

High-Performance Linpack (HPL) was the primary benchmark used to quantify the cluster's compute throughput and to estimate how performance would scale as nodes and processes were added. HPL solves a large, dense system of linear equations using LU factorization with partial pivoting, which makes it a standard measure of floating-point performance (FLOPS) under a combined computation and communication load. Running HPL on the four-node cluster exposed the tradeoff between compute capability and communication overhead in a distributed-memory system, where performance depends not only on raw CPU speed but also on efficient data exchange. Two key tuning parameters are the block size (NB), which sets the granularity of the two-dimensional block-cyclic distribution of the matrix across processes, and the process grid layout (P×Q), which determines how those processes are arranged and therefore which of them communicate during the factorization.
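The tuning inputs described above can be estimated with simple arithmetic before editing HPL's `HPL.dat` input file. The sketch below assumes a common rule of thumb (size the N×N double-precision matrix to fill roughly 80% of aggregate RAM, rounded down to a multiple of NB), picks the most nearly square P×Q grid with P ≤ Q, converts a run time into Gflops from LU's leading-order cost of about (2/3)N³ operations, and computes scaling efficiency relative to a single node. The 4 GiB-per-node memory figure, 4 cores per node, NB = 192, and all function names are hypothetical; none of them are measurements from this cluster.

```python
import math


def hpl_problem_size(total_mem_bytes, nb, mem_fraction=0.8):
    """Largest N (a multiple of nb) whose N x N float64 matrix fits in mem_fraction of RAM."""
    n = math.isqrt(int(mem_fraction * total_mem_bytes / 8))  # 8 bytes per double
    return (n // nb) * nb


def process_grid(nprocs):
    """Most nearly square P x Q factorization of nprocs with P <= Q."""
    if nprocs < 1:
        raise ValueError("need at least one process")
    p = math.isqrt(nprocs)
    while nprocs % p != 0:
        p -= 1
    return p, nprocs // p


def hpl_gflops(n, seconds):
    """Gflops from the leading-order LU cost, (2/3) N^3; lower-order terms ignored."""
    return (2.0 / 3.0) * n ** 3 / seconds / 1e9


def scaling_efficiency(gflops_n_nodes, gflops_one_node, n_nodes):
    """Fraction of ideal linear speedup achieved on n_nodes."""
    return gflops_n_nodes / (n_nodes * gflops_one_node)


if __name__ == "__main__":
    GIB = 1024 ** 3
    nodes, cores_per_node, nb = 4, 4, 192        # hypothetical cluster shape and NB
    n = hpl_problem_size(nodes * 4 * GIB, nb)   # assumes 4 GiB of RAM per node
    p, q = process_grid(nodes * cores_per_node)
    print(f"N={n}, NB={nb}, P={p}, Q={q}")
```

Preferring a near-square grid with P ≤ Q reflects commonly cited HPL tuning guidance; NB and the memory fraction are usually then swept empirically, because the best values depend on the BLAS library and the network.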